Sixfold Content

Sixfold News

Sixfold Partners with Adnovum

Sixfold is teaming up with Adnovum, the Swiss technology and consulting company specializing in secure digital transformation for the insurance industry.

Stay informed, gain insights, and elevate your understanding of AI's role in the insurance industry with our comprehensive collection of articles, guides, and more.

Sixfold’s AI Governance Program

Sixfold's AI Governance Program is an ongoing internal process for identifying, assessing, and mitigating the risks of deploying AI. It is organized into five focus areas with named owners, defined deliverables, and a regular cadence of reviews.

This post explains what it covers and why we built it the way we did.

Why we established this now

Responsible AI is not new territory for Sixfold. We have been thinking carefully about how we build and deploy AI in insurance underwriting since the day we launched in May 2023.

The honest answer to "why now?" is this: we wanted to formalize what we were already doing, and the regulatory environment gave us both the mandate and the structure to do it well.

The reality is that AI legislation is different from most compliance frameworks insurers and vendors are familiar with. AI compliance is process-based. It is about building the organizational muscle to understand whether your systems could cause harm, and then evaluating that question consistently over time.

That kind of ongoing work requires real ownership, clear processes, and people who are explicitly accountable for staying on top of it. We also made a deliberate choice not to chase specific legislation line by line. AI laws are still evolving across jurisdictions and are contested at every level. Instead, we anchored the program around established industry reference points:

- The NIST AI Risk Management Framework (AI RMF 1.0), a voluntary framework from the U.S. National Institute of Standards and Technology that gives durable guidance for risk and oversight.

- The EU AI Act's high-risk provider requirements (Articles 9-17), which set concrete expectations on documentation, testing, and accountability.

That approach is more durable and more honest about where regulation actually is today.

From principles to practice

Sixfold has had a set of responsible AI principles since early on. We formalized and launched our first Responsible AI approach in 2024 and launched an updated version in 2025. The governance program translates those principles into organized action.

"Principles are well and good, but without action words they're just hot air."

– Noah Grosshandler, Product Manager and AI Governance Program Lead at Sixfold

The program gives us specific owners, defined deliverables, and a cadence for revisiting each area. It creates the connective tissue between what we believe and what we actually do. Critically, it is built to change over time, because the landscape will keep moving, our product will change, and what it means to apply those principles responsibly will change with them.

We are building toward a complete Annex IV documentation package, the technical documentation the EU AI Act requires for high-risk systems and the concrete output regulators and enterprise customers ask for. The program also addresses the algorithmic-discrimination requirements found in much of the emerging global AI legislation, which are especially relevant to our customers operating in the life and health space. Bias and fairness testing is part of the program, with methodology tailored to each line of business.

How the program is structured

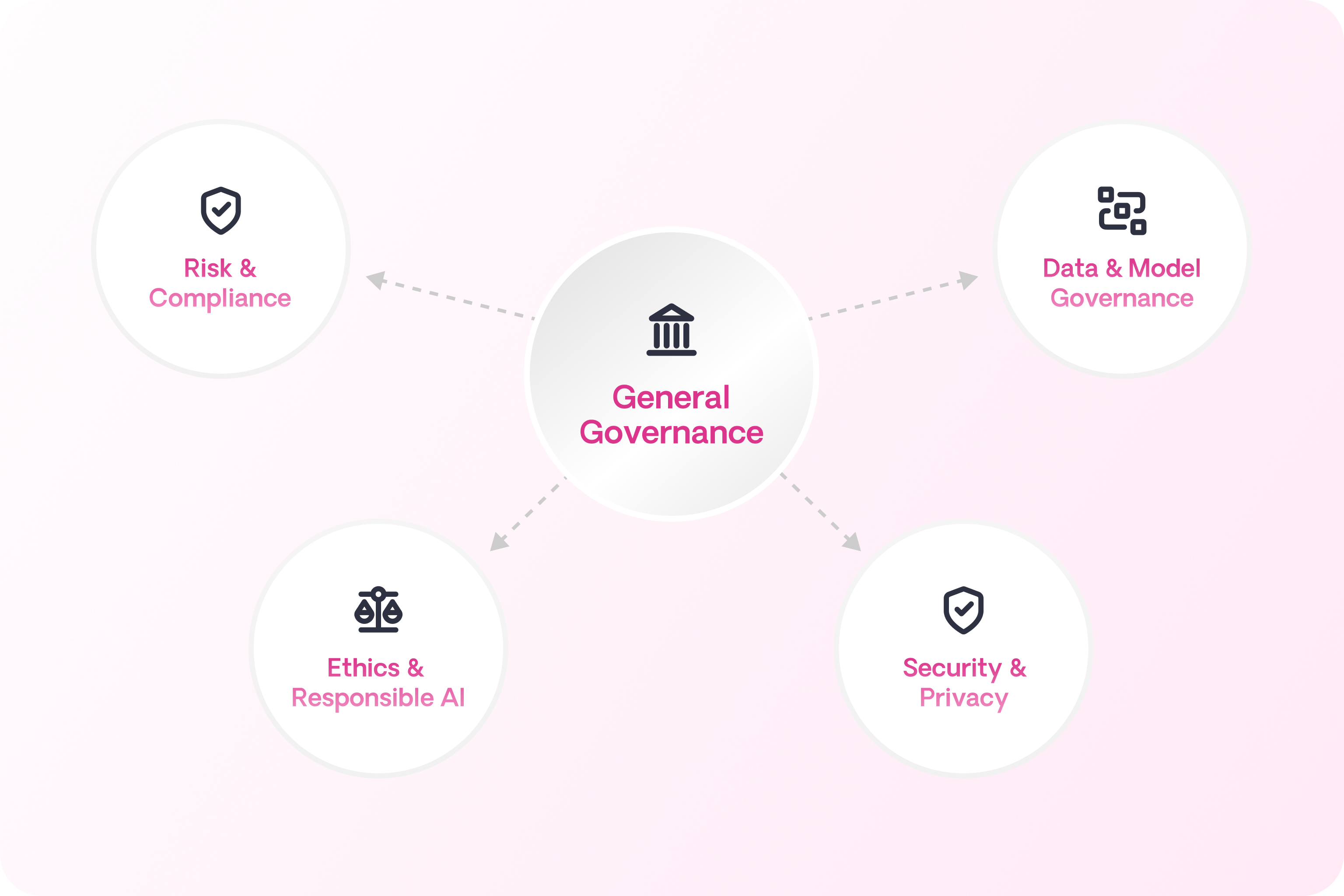

The program is organized around five focus areas, each with its own owners and activities:

General governance covers the program itself: keeping the right people informed, running annual retrospectives, and ensuring continuity when things change.

Risk and compliance is responsible for identifying and tracking AI-specific risks, reporting on them quarterly, and handling any ad hoc mitigation or incident response as needed.

Data and model governance focuses on the data used to develop and train our systems. This means understanding where data comes from, confirming it is used ethically, and being able to clearly explain how system behavior relates to the inputs that shaped it.

Ethics and responsible AI covers fairness, explainability, and human oversight. It is the function that asks where humans should stay in the loop, what our position is on where AI should and should not operate, and whether the way our system works is genuinely ethical, not just technically sound.

Security and privacy handles the technical infrastructure: making sure the underlying systems meet the security and privacy requirements of what we are building.

Each area has its own cadence. Risk and compliance runs quarterly reviews. The ethics function does the same, looking at new functionality and evaluating human oversight design. Between those cycles, the full group of program owners meets bi-weekly to stay coordinated.

"The different areas of focus don't need to change when a new regulation drops or a new product drops, we can slot new requirements into the existing structure."

– Noah Grosshandler, Product Manager and Facilitator of the AI Governance Program

One thing worth being explicit about: having dedicated program owners does not mean this is only their responsibility. At the end of the day, the company is accountable. Every person at Sixfold is responsible for building software that is honest, transparent, and ethical. The program owners exist to maintain the expertise and the process rigor that makes that possible at scale.

What this means for our customers

We have customers in North America, EMEA, South America, and Australia today. Across the globe, our customers face their own compliance pressures as deployers, and we can support them in meeting those obligations. Many of them are being asked by their own regulators and partners to demonstrate responsible AI practices, and most are still working out what that means for them.

The governance program helps us support our customers directly:

- When a customer receives a compliance questionnaire about their AI vendor, we will have clear and documented answers.

- When they are trying to build their own responsible AI stance, we can share what we have learned. We are not just handing over a package of documents; we are trying to be a resource that supports them as they build out their own AI governance practices.

- Our customer success team works with each customer individually, because our customers operate across different jurisdictions, different product lines, and different regulatory environments. There is no one-size-fits-all approach here.

"The company is accountable. Just because there is this program and this charter doesn't mean that it is not every single person at this company's job to make sure that what we're building is built ethically, built honestly, built transparently."

– Noah Grosshandler, Product Manager and AI Governance Program Lead

What comes next

In the near term, we are focused on achieving the right baseline: making sure all documentation is in place, all processes are codified, and we are ready for the enforcement deadlines that matter.

The longer-term goal is bigger. We want to continue to be at the forefront of what responsible AI development actually looks like in insurance, not just compliant on paper but genuinely ahead of the problem. That means working with bodies like the National Association of Insurance Commissioners (NAIC) to share what has worked and what has not. And it means making sure the program itself stays modular and adaptable, so when new regulations drop or our product evolves, we can slot in new requirements without rebuilding from scratch.

We built the governance program because Responsible AI is a core part of Sixfold. The structure the program provides is how we make sure that commitment holds.

Questions about Sixfold's AI governance approach? Reach out to your customer success representative or get in touch with our team.

Learn more about our commitment to being a Responsible AI organization here.

━━━

FAQ

What is an AI Governance Program? An AI Governance Program is a structured and ongoing internal process for identifying, assessing and mitigating risks. Sixfold developed the program to mitigate the risks of deploying our AI in insurance underwriting. It is organized into five focus areas, each with named owners, defined deliverables, and a regular review cadence.

Why did Sixfold create a formal AI governance program? To formalize responsible AI practices it had been following since launch, and to meet the requirements of emerging AI legislation. AI compliance is process-based and requires ongoing evaluation.

Is the AI Governance Program required by law? Not by a single law, but most emerging AI regulations require some form of oversight program. Rather than chasing specific legislation, Sixfold anchored the program in established frameworks like the NIST AI RMF, an approach that is more durable given how frequently AI laws are still changing.

How often does Sixfold review its AI governance program? Program owners meet bi-weekly. Risk and compliance runs quarterly reviews, as does the ethics and responsible AI function. Incident response is handled ad hoc as needed.

How does the AI Governance Program help insurance customers with their own compliance? Sixfold gives customers documented answers to vendor compliance questionnaires and a framework they can learn from as they build their own responsible AI practices. Sixfold's customer success team works with each customer individually given differences in jurisdiction and product line.

Who is responsible for AI governance at Sixfold? The whole company. Named owners across the five focus areas maintain the expertise and process rigor, but every person at Sixfold is accountable for building software that is honest, transparent, and ethical.

Sixfold Partners with Microsoft Azure

Sixfold and Microsoft are teaming up to make AI in insurance underwriting faster to deploy and easier to integrate within the Microsoft Azure Marketplace.

Exciting news! Sixfold is officially live on Microsoft Marketplace, the trusted online destination for enterprises to discover, purchase and deploy cloud solutions, AI apps and agents directly within their Microsoft ecosystem.

Carriers and MGAs can now discover and deploy Sixfold directly through Microsoft Marketplace with smooth integration and streamlined management.

Sixfold now meets insurers within their existing technology ecosystem. This partnership simplifies integration, streamlines management, and reduces implementation timelines, allowing carriers to leverage their existing Azure commitments to accelerate time-to-value.

Why Microsoft

Our mission at Sixfold has been to make AI adoption in insurance faster and more accessible. A big part of achieving this is meeting insurers where they already are, inside a technology infrastructure they know and trust.

By joining Microsoft Marketplace, we’re doing exactly that. This partnership simplifies procurement, streamlines integration, and lets insurers apply their existing Azure commitments toward deploying Sixfold, removing the friction that typically slows enterprise adoption.

"This collaboration with Microsoft marks a significant milestone in our growth journey. By integrating with the Microsoft Marketplace, we're making Sixfold and our Underwriting AI brain easier to discover and deploy. Insurers can implement our capabilities directly within their existing Azure environment, reducing integration complexity and accelerating time-to-value by streamlining procurement through their established Microsoft relationship."

– Roger Ferrandis, Head of Partnerships at Sixfold

What Changes for Insurers

Until now, adopting Sixfold meant navigating a standalone procurement process with separate contracts, separate timelines, and separate budget approvals. For enterprise insurers, that friction adds weeks and creates unnecessary approval cycles before a single submission gets processed.

With Sixfold on Microsoft Marketplace, that changes. Carriers and MGAs can now:

- Discover and deploy Sixfold directly within their existing Azure environment

- Apply existing Azure cloud commitments toward their Sixfold adoption

- Eliminate redundant approval cycles by consolidating procurement within an established Microsoft relationship

- Move faster from decision to deployment with fewer operational barriers

“We’re pleased to welcome Sixfold to Microsoft Marketplace,” said Cyril Belikoff, vice president, Microsoft Azure Product Marketing. “Marketplace connects trusted solutions from global partners with customers worldwide, making it easy to find and deploy apps that work seamlessly with Microsoft products.”

How to Get Started

Sixfold is trusted by insurers globally, including Zurich, Mosaic, Skyward, Generali, and many more. In total, Sixfold has processed over one million submissions for carriers writing more than $265B in premiums.

Ready to get started? Explore Sixfold on Microsoft Marketplace

Questions about the partnership or curious to learn more about Sixfold? Reach out to your customer service representative or get in touch with our team.

━━━

Frequently asked questions

How long does it take to deploy Sixfold through Microsoft Marketplace?

Deployment timelines are significantly shorter through Microsoft Marketplace than through standalone procurement. Standalone Sixfold adoption requires separate contracts, implementation cycles, and budget approvals, each adding weeks to the process. By routing through an existing Azure relationship, insurers eliminate those separate approval cycles and simplify the path from procurement to deployment within their established cloud infrastructure.

What's the difference between buying Sixfold directly versus through Microsoft Marketplace?

Purchasing through Microsoft Marketplace lets insurers apply existing Azure cloud commitments to Sixfold, whereas a direct purchase requires a separate budget allocation and procurement cycle. The core AI underwriting functionality is the same either way. The Marketplace route removes the need for additional vendor approval processes and integrates billing within an insurer's existing Microsoft spend, which is a meaningful operational difference for enterprise finance and IT teams.

How much more productive do underwriters become after adopting AI underwriting tools?

Underwriters using Sixfold process up to 50% more submissions without additional manual research or administrative work, according to Sixfold's published performance data. That productivity gain translates to up to 30% more Gross Written Premium (GWP) per underwriter. The efficiency comes from automated submission screening and appetite-aligned insights, freeing underwriters to focus on judgment-intensive decisions and broker relationships.

What compliance and security standards does Sixfold meet for enterprise insurers?

Sixfold's deployment within Microsoft Azure inherits the governance, security, and compliance controls built into the Azure platform, which is designed to meet enterprise and regulated-industry requirements. This matters in insurance because carriers operate under strict data governance obligations. Deploying within an existing Azure environment means compliance postures already approved by IT and legal teams carry over, rather than requiring a separate security review for a new vendor environment.

How do AI underwriting tools handle transparent sourcing of recommendations?

Sixfold provides appetite-aligned insights with transparent, cited sources, meaning underwriters can see the reasoning and reference material behind each recommendation, not just an output. This auditability is a specific design requirement in insurance, where underwriting decisions must be defensible to regulators, reinsurers, and internal governance teams.

New Life & Health Narrative: Meeting Underwriters At Every Step

Narrative is Sixfold's new capability for Life & Health, giving underwriters a customizable, structured summary at initial review. It surfaces the key details needed to move a case forward, reducing back and forth and simplifying triage before deeper analysis.

Life & Health underwriting is a constant balance between speed and quality: getting the right coverage to customers before they go somewhere else. One missed detail can change the whole quote. But the longer pricing takes, the greater the risk of applicant drop-off.

Accelerated underwriting helps with simpler cases that don't require additional evidence, but as recently reported by Gen Re, up to 88% of cases still need some type of human review.

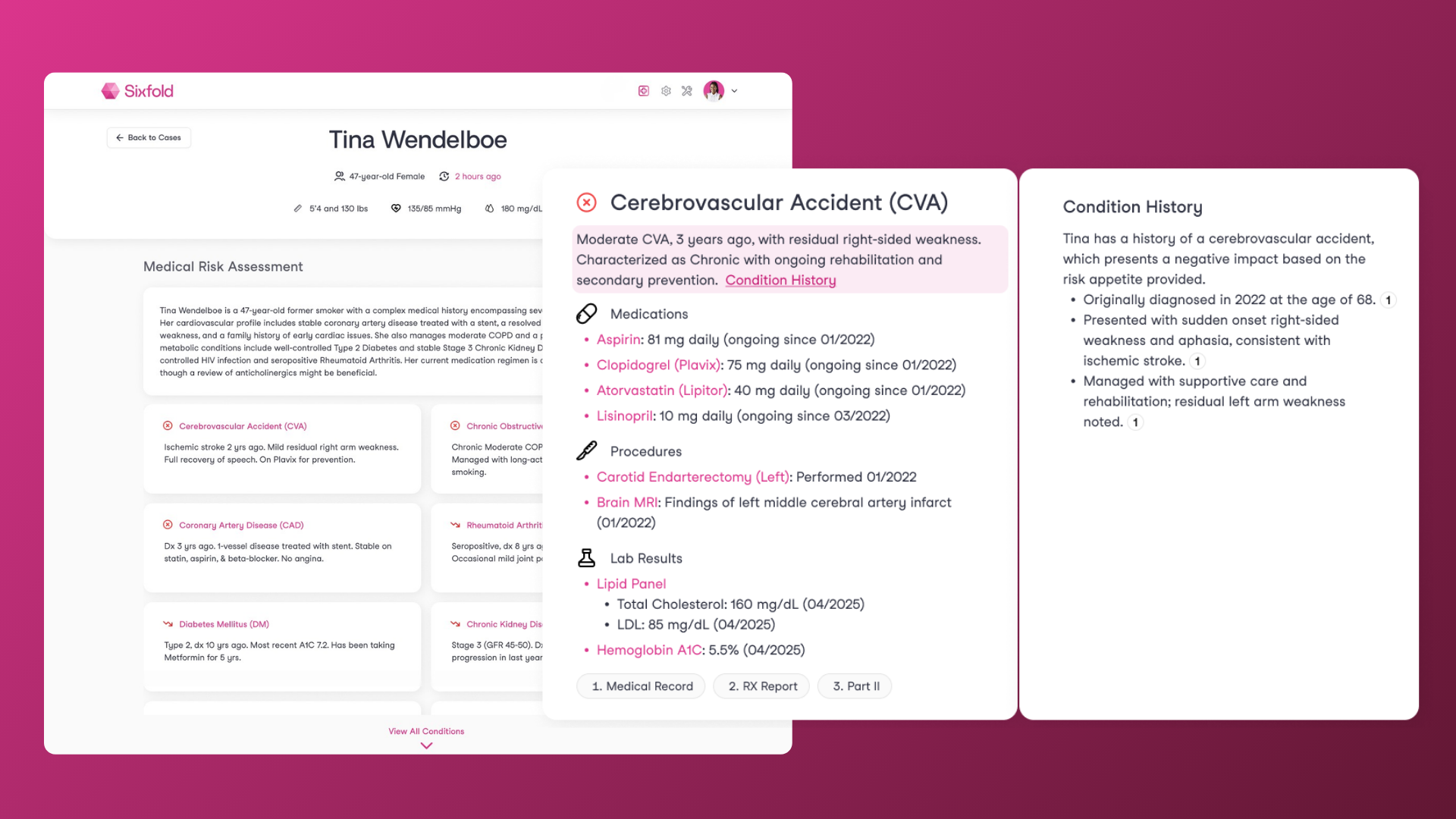

Those cases still go through the same workflow: sorting through documents at initial review, going back to the advisor or broker when something's missing, and, when the case is finally ready, reading through hundreds of pages of medical records like electronic health records (EHRs), attending physician statements (APS), and lab results to piece together the full health picture before making a decision.

It's a lot of steps and a big manual workload that keeps underwriters from bringing in more premiums and getting back to customers faster with better coverage. That’s where Sixfold comes in: underwriting AI built to help at every step of the process by bringing together the details that matter to your team. Our new Narrative adds a tailored initial analysis that simplifies triage and cuts unnecessary back-and-forth before the deeper analysis begins.

The First Look At a Case

At the initial review, underwriters are trying to answer one question: Do I have what I need to move this case forward? Most of the time, that means going through multiple documents just to find out, and when something's missing, at least 30 minutes are spent on a case they'll have to come back to later.

Our new Narrative capability for Life & Health is a focused, structured case summary tailored to what matters to your underwriting team, surfacing the information you want to see upfront to help underwriters with triage and next steps. Sixfold’s AI agents read through all the case documents (application, APS, and labs) and pull the relevant details together into one structured view, so underwriters don't have to. Each Narrative is configurable to your team's specific workflow, so what gets surfaced reflects what actually matters for your decisions.

Imagine a case where an applicant has hypertension or a history of elevated cholesterol. The underwriter immediately needs to know if they have all the information about blood pressure and what meds they're on. Does this applicant smoke? What is their family history? This is information they need before they even begin to assess the case.

The Narrative gives you exactly the information you need to know before you move on.

"We want to support underwriters at every point in their workflow. Sometimes that means a focused Narrative to help with triage and next steps. Sometimes that means a deep condition analysis across hundreds of pages. The point is, wherever an underwriter is in the process, Sixfold is there to help."

— Noah Grosshandler, Product Manager at Sixfold

On the reinsurer side, it's a similar story. Underwriters spend precious time just organizing loosely assembled submissions from cedents, because cases usually arrive in inconsistent formats. That means additional questions before quoting, many of which wouldn't even be necessary if the information were clearly structured upfront.

With Narrative, these questions are answered upfront, which means fewer emails back and forth with brokers and faster responses.

The Full Clinical Story: Conditions Analysis

After triaging and making sure a case is ready to quote, underwriters go back to the manual review of hundreds of pages of medical records, trying to spot the one detail that will change the whole quote. It's detective work: figuring out how a medication connects to a diagnosis and what the impact is on the patient, assessing history, severity, and how well each diagnosis is managed, and piecing together the full medical story. Meanwhile, customers are waiting for a response.

That's where Sixfold's in-depth Conditions Analysis comes in. When the case is ready for a full review, our Conditions Analysis connects all the medical pieces, bringing together medications, labs, procedures, and diagnoses under each condition so underwriters can see the full clinical picture without jumping between documents. It highlights what's relevant to your underwriting guidelines, shows how conditions have progressed over time, and surfaces the clinical data that matters most for your decisions.

One example is a case where an applicant is taking spironolactone, a common medication used to control high blood pressure that is also prescribed for hormonal acne. An underwriter would have to go through many pages of documentation to find the dosage, how long the applicant has been taking it, and what it is actually being prescribed for. And that's just one medication. For complex cases, underwriters are doing this across multiple conditions with years of medical history.

With our Conditions Analysis, underwriters can spend their time on decision-making rather than document review and data gathering. Customers get faster and better quotes. Guardian, for example, saw a 50% reduction in review time using Sixfold.

When Speed is What Matters

Some of our customers work in higher-volume lines like structured settlements and annuities, often processing over 10,000 cases per year, and they don’t always need an in-depth Conditions Analysis to move a case to the next step.

Because fewer key risk details influence the analysis in these lines, these teams have been able to use Narrative to move forward on up to 70% of their cases, consulting our full Conditions Analysis only for the most complex ones. For some teams, Narrative handles most of the heavy lifting of manual review.

"One of our customers built Narrative into their workflow for initial case review. It helps their underwriters get up to speed quickly and know exactly what needs attention. When a case requires deeper analysis, they move to our full Conditions Analysis, but Narrative gives them that strong starting point." — Justin Sorce, Customer Success Manager at Sixfold

Confident Underwriting Decisions

Whether it's a quick initial review or a deep dive into a complex case, Sixfold is there to help your team get to a confident underwriting decision faster.

And it works where your team already works, whether that's in our UI, a workbench, or a policy admin system. Every insight ties back to the source documents, so underwriters can verify what they're seeing. Sixfold has been built for underwriting since day one: it is HIPAA compliant and SOC 2 certified, with single-tenant environments to keep your data secure, and it undergoes rigorous AI fairness testing to meet evolving regulatory standards, grounded in our Responsible AI principles.

Introducing Institutional Intelligence

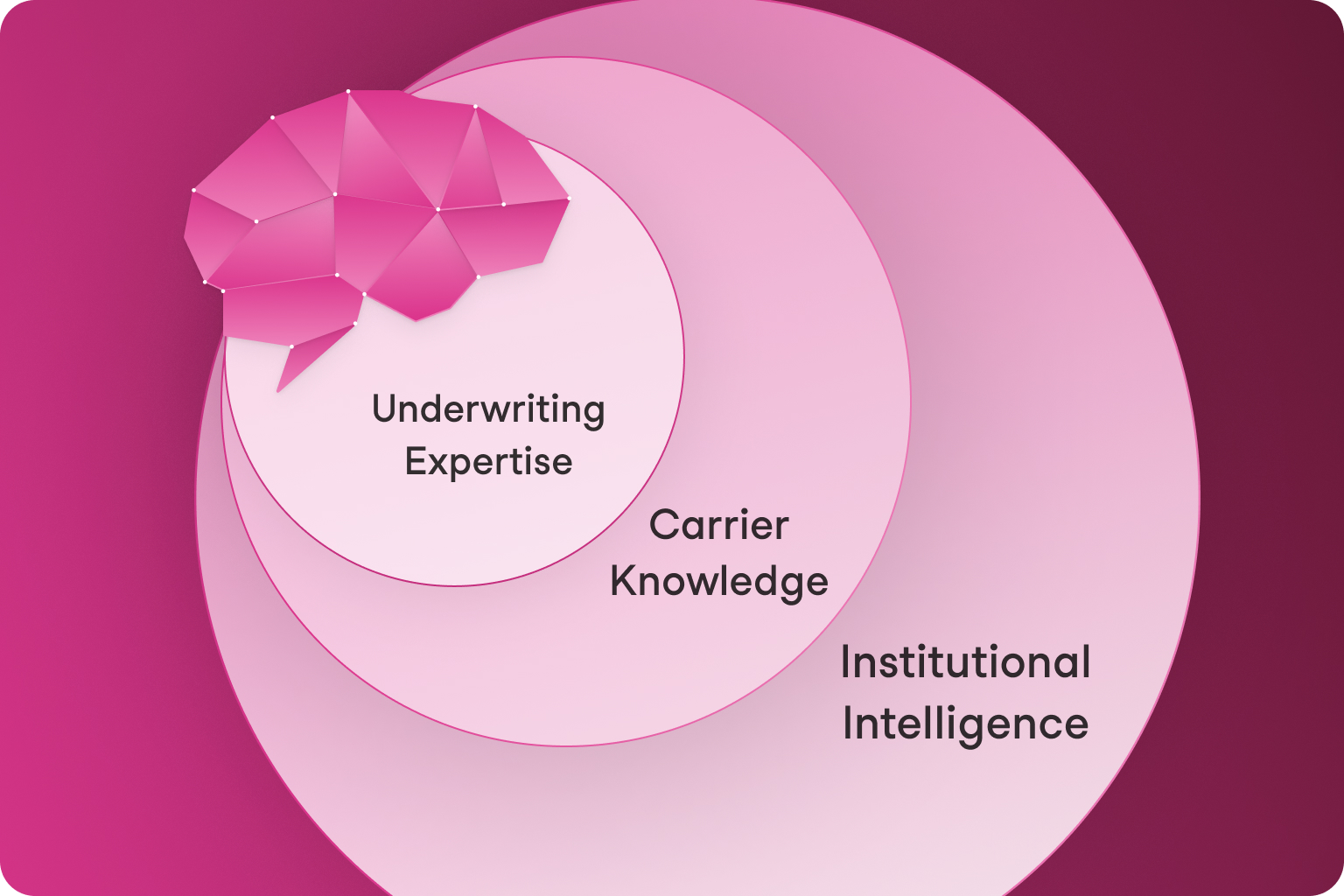

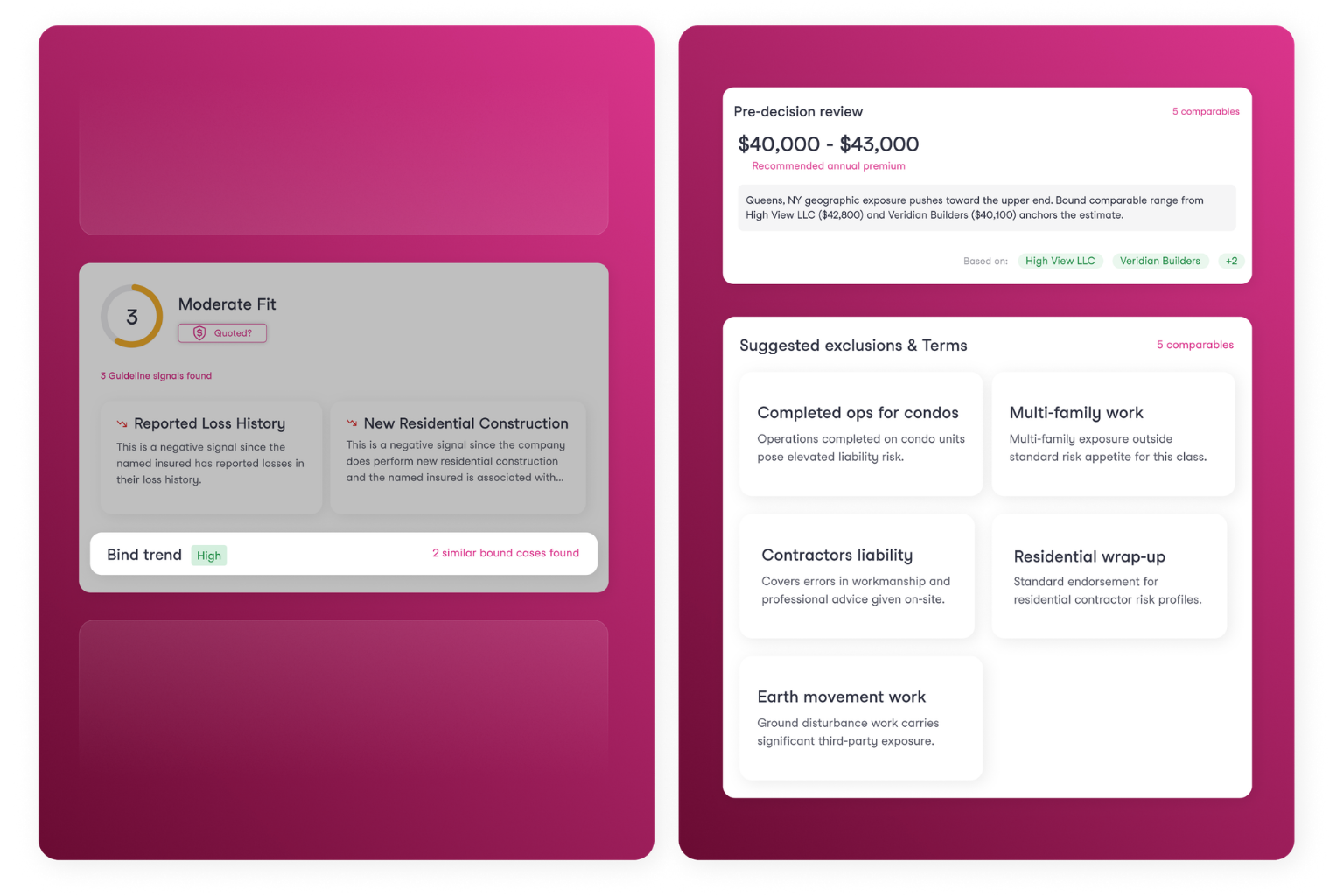

Institutional Intelligence is an advancement in Sixfold's Underwriting Brain that connects risks to the decisions and outcomes that follow. It surfaces new types of insights and recommendations that elevate underwriters and keep you competitive without losing sight of portfolio balance.

Underwriting judgment is one of the most valuable assets an insurer has, and one of the most fragile. Great underwriters develop a feel for risk that goes beyond guidelines, judgment built from thousands of decisions over a career. It's what shapes portfolio quality and shows up in loss ratios. But that judgment is unique to each individual underwriter. The underwriters around them don't have access to it, and when they retire or move on, it's gone. Meanwhile, the portfolio intelligence that should inform every decision (how similar risks have performed, which broker relationships produce adverse selection, where losses concentrate) doesn't reach underwriters where and when they need it.

AI underwriting changes what’s possible here. Every processed submission leaves behind structured and unstructured traces, some of which are not easily identified: extracted risk factors, reasoning paths, agent recommendations. When connected to the decisions and outcomes that follow, those traces form a learning loop, and Institutional Intelligence builds.

When this intelligence is properly put to use, your underwriting gets smarter. New underwriters ramp faster, operating on insights with expertise and portfolio context built in. Decisions become more consistent across the team, grounded in collective knowledge rather than individual memory. Expertise doesn't walk out the door when senior underwriters move on. The entire organization is upleveled with knowledge that used to live in silos.

What We’re Building

Strengthening Our Foundation

Sixfold's Underwriting Brain is at the center of everything we do. We’re deepening its expertise through specialty training and certification courses, building a sharper understanding of how underwriters work end to end and the nuances across lines of business. The Brain then captures carrier-specific guidelines and appetite with its patented appetite ingestion engine, refined over time through an underwriter feedback loop. Playbooks go further, encoding complex references, ontologies, and documented practices unique to your organization, so Sixfold doesn't just understand underwriting, it understands how you underwrite.

Building Institutional Intelligence

The Brain then builds Institutional Intelligence through a learning loop, the first phase of which is available today. Carriers can feed decision and outcome data back into Sixfold through enhanced case statuses and the Sixfold API. This data further shapes Sixfold’s understanding of your appetite and what wins business.

The learning loop expands as more data flows in. We're working closely with customers to build the data connections that matter most: broker performance, referral decisions, policy structure, losses. The goal is Institutional Intelligence that moves the metrics carriers actually manage to: winnability, portfolio performance, loss ratios. And because we deploy each customer in an isolated environment, the intelligence captured is yours alone.

Agentic self-learning comes next: identifying patterns across submissions, learning which signal combinations predict losses, and letting the Brain refine its own understanding of your desired portfolio over time. We're building toward self-learning deliberately, with the governance, performance monitoring, and controls enterprise insurers expect from Sixfold.

Putting It To Work

A new agentic backend puts these layers of knowledge to work. The Brain and the agents behind it build a research and assessment plan specific to each incoming submission, reasoning through what information they need and what actions to take. They surface insights and recommendations to underwriters and take actions, all grounded in the expertise and Institutional Intelligence that sits in the Brain. As Institutional Intelligence deepens, so does what Sixfold can do for your team.

Current customers can begin feeding decision and outcome data into Sixfold today. Contact your Customer Success Manager to learn more.

Sixfold is actively conducting research sessions to help shape the future of our product. If you're interested in participating, reach out to us at hello@sixfold.ai.

Not yet a customer? Request a demo.

At Lloyd’s: The Future of Underwriting

Sixfold hosted an event at Lloyd’s in London focused on the next generation of underwriters, featuring speakers and insights from Berkley, Victor Insurance, Generali GC&C, and Torch Underwriting.

Yesterday, Sixfold hosted an event at Lloyd’s in London on the future of underwriting work.

The event started with a keynote from Dr. Naeema Pasha on the future of work across industries, along with findings from her research with Allianz. One of the key takeaways was that the future of insurance still needs to be focused on people. With AI becoming more present, the question is how to build trust, support empathy and skills development, and design roles for the next generation of talent.

“What stood out in my research with Allianz was how much people in the insurance industry care and are passionate about the industry and its future.”

─ Dr. Naeema Pasha, Researcher, Author and Writer

There was also a great question from an aspiring underwriter asking what skills are needed today. Soft skills came up a lot, things like curiosity and adaptability, but also something simple: get out there, engage with people in the industry, and ask questions.

At one point, the audience was asked if they would let a robot cut their hair. Most people wouldn’t. It’s a simple example, but it comes back to trust.

The panel discussion featured Simon Parris, CUO at Victor Insurance; John Enright, COO at Berkley; and Raoul Carlos, Founder & CEO at Torch Underwriting, and focused on whether this is the last generation of traditional underwriters.

They started by sharing where they are today when it comes to AI implementation. A clear theme was that adoption and engagement from underwriters really matters. Agentic AI is moving fast with a lot of potential, and there was a lot of discussion around how AI can improve broker relationships and risk assessments as a whole. Raoul talked about building AI into their foundation from day one, including the data layer needed to support institutional intelligence over time.

When it came to the impact of AI, the conversation went beyond speed. Memorable engagement with brokers, pricing power through stronger relationships, more personal service, net promoter score, and the ability to think outside the box all came up, as did better service and better products.

On hiring the future workforce, Raoul mentioned looking for curiosity and passion. Where the role used to be heavily focused on data wrangling, today it is much more about judgment, adaptability, creativity, and critical thinking. John highlighted integrity, trust, relationship skills, and the ability to work with a new toolkit. Simon mentioned market connections, strong underwriting fundamentals, and openness to new technology.

“The mechanics of how we work are changing, but this has happened hundreds of times throughout history.”

─ Raoul Carlos, Founder & CEO @Torch Underwriting

When asked what the underwriting role of the future might be called, the panel largely agreed it is still underwriting. Portfolio underwriting was mentioned, as well as next generation underwriter, but the core remains the same. From the audience, there were also questions around how to enter the industry. It is not just about academics. Apprenticeships matter. Continuous learning matters. And building teams with different perspectives and backgrounds still really matters.

On dealing with skepticism internally around AI, it was acknowledged that change can be uncomfortable. The advice was to make it part of the conversation, share examples, and host workshops. For insurance executives looking to learn more about AI, the message was to experiment and try it firsthand. Get into vibe coding, step outside of the comfort zone, and understand the opportunities by actually using the tools.

Lastly, Gianfilippo Giannini, Global Technical Coordinator Cyber Risk at Generali GC&C, took the stage for a Q&A and shared why they started looking for an AI vendor in the first place. They were operating in a soft market and needed to handle more submissions with the same headcount.

He also highlighted the importance of bringing underwriters in from the very beginning. The decision to choose Sixfold came down to security, privacy, a strong responsible AI framework, and a future-proof roadmap, and just as importantly, to the fact that the solution was clearly built for underwriting.

“Sixfold spoke the same language as us.”

─ Gianfilippo Giannini, Global Technical Coordinator Cyber Risk @Generali GC&C

There was some early skepticism from users, but once underwriters saw how it supported their day-to-day work, feedback quickly became very positive. At one point, underwriters who were not part of the pilot started asking when they could start using Sixfold as well.

Looking ahead, Generali GC&C's focus for AI in underwriting is data-driven underwriting, with better visibility into portfolio trends and broker success rates.

Scaling AI in Underwriting: Lessons from Skyward & Zurich

Most insurers have run an AI pilot. Far fewer have scaled one. At a recent Sixfold webinar, two carriers shared what it actually took to get there, and what production at scale really looks like.

Amy Nelsen, Head of UW Operations for US Middle Market at Zurich North America, and Jim Mormile, President of Professional Lines at Skyward Specialty, spoke about their experience rolling out Sixfold across their organizations.

Skyward is now live across more than 10 product lines with nearly 100 underwriters. Zurich has deployed across 30 US offices, with more than 200 underwriters using Sixfold in their daily workflow.

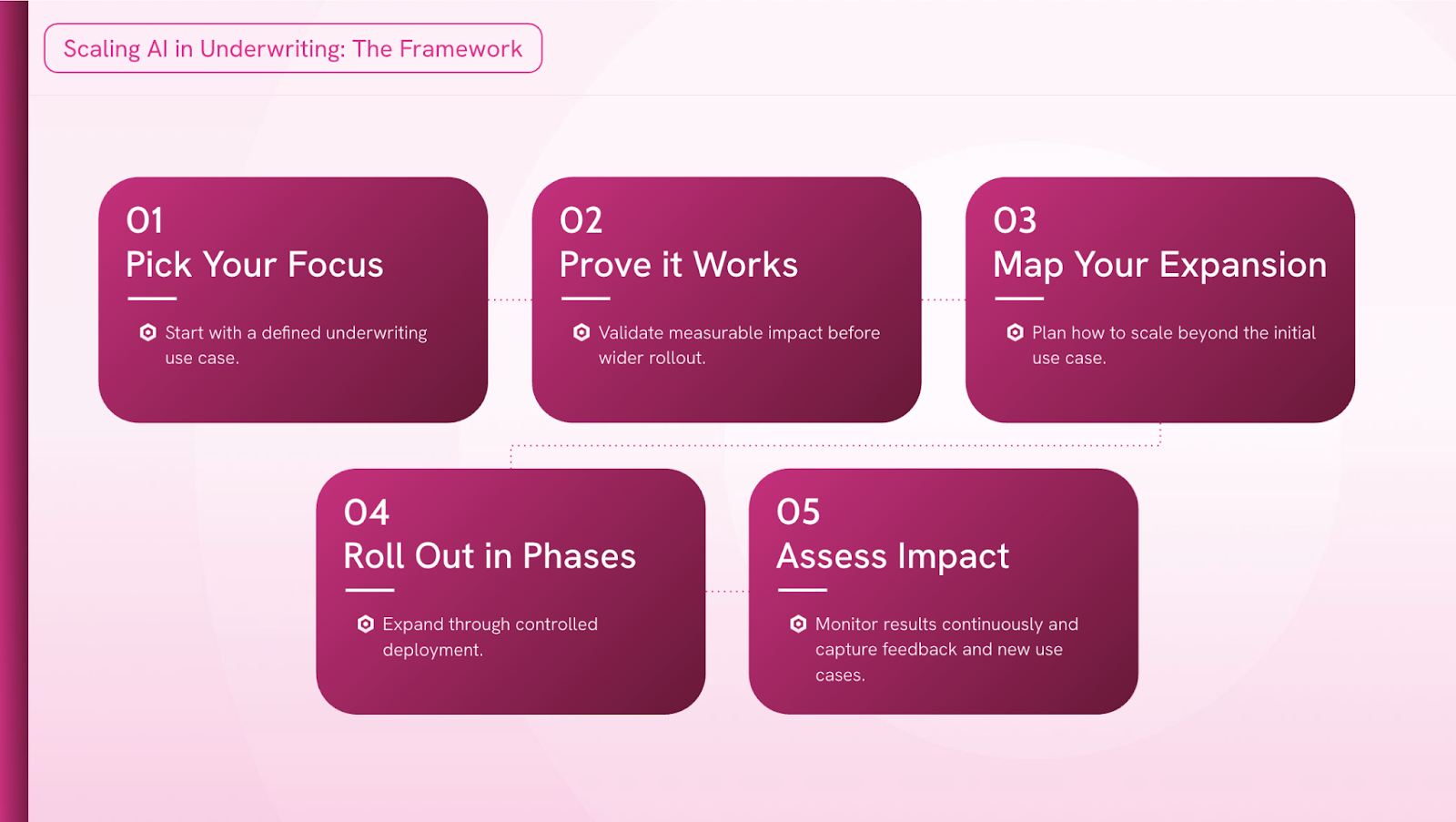

We walked through our five-step scaling framework with both of them to hear, in their own words, how each stage played out in practice.

Step 1: Pick Your Focus

The biggest mistake teams make is starting with AI and working backwards to find a problem. Both Amy and Jim were deliberate about doing the opposite, finding a genuine pain point first and then asking whether AI could solve it.

For Skyward, the problem was the triage wall. Underwriters were spending hours manually working through submissions just to determine basic appetite fit.

For Zurich, it meant starting with a task underwriters disliked: documentation.

"We had a healthcare risk where the underwriter got about a 75-page submission. It wasn't until page 56 to 59 that they realized the submission should not be covered since it was out of appetite."

─Jim Mormile @Skyward Specialty

Step 2: Prove It Works

Before scaling, you need a few signals that it's actually working, and those don't always come from a dashboard. Both Jim and Amy found that the most meaningful early indicator was user feedback.

For Jim, it was a veteran underwriter pulling him aside unprompted.

"The anecdotal evidence that really made us realize it was working: it was a veteran underwriter that came up to me and said, '[Sixfold] actually changed my day. It sped up my work progress and workflow.'"

─Jim Mormile @Skyward Specialty

The follow-up signal was equally telling: "underwriters stopped asking whether they should use Sixfold and started asking when it was coming to their line of business." That's when Skyward knew it was time to expand.

Step 3: Map Your Expansion

Scaling AI isn't a single rollout; it's a series of decisions about sequencing, readiness, and change management. Both organizations took a structured approach, but in different ways.

Skyward scored each of their 16 lines of business across criteria like guideline robustness, process standardization, underwriting complexity, and, critically, how tech-forward the underwriting team was. They then hand-picked early adopters rather than opening the floodgates.

Zurich took a geographic approach, piloting across four offices before expanding countrywide, and intentionally included underwriters at different levels of tech comfort so they could anticipate resistance before it became a problem at scale.

Both teams also addressed job security fears head-on.

"It's less about the underwriting role becoming irrelevant, and more about if you handle a $10M book of business versus a $20M book of business."

─Amy Nelsen @Zurich North America

Jim's approach was to be explicit about what AI wouldn't do: no automated decision-making, no replacing the underwriter's final judgment. The framing was always about giving underwriters better information, not replacing their expertise.

Step 4: Roll Out in Phases

Both teams learned that trying to do too much too fast creates fatigue, and fatigue kills adoption. Skyward actually hit pause on one line of business after underwriters started showing signs of frustration. Rather than pushing through, leadership made the call to step back.

A few months later, that team came back ready to re-engage, pulled in by FOMO after watching other lines of business benefit.

On the flip side, Skyward's phased approach led to real efficiency gains in deployment speed. Their first two lines of business took 12 to 14 weeks from introduction to production. By the time they were rolling out subsequent lines, they'd cut that down to eight weeks.

Amy's experience at Zurich echoed the same takeaway on speed:

"The most amazing part is how fast you can get from just a concept to deployment with Sixfold."

─Amy Nelsen @Zurich North America

Step 5: Assess Impact

Once AI is live in production, measuring success means looking at two things in parallel: business outcomes and output quality. Neither alone tells the full story.

For Jim at Skyward, that meant tracking time to quote and number of quotes out the door, but also running a monthly accuracy review and a sentiment score across underwriting teams.

"We constantly look at both ends of the spectrum: the ROI metrics, and whether the accuracy is there to give our underwriters the information they need to make better decisions."

─Jim Mormile @Skyward Specialty

Amy's approach at Zurich was similar, and revealing in its simplicity. Sixfold's success isn't measured separately from the business; it's measured through the business. When AI becomes embedded enough that you stop evaluating it as a standalone tool and start measuring it through your core business metrics, that's when you know it's really working.

"We're measuring our business outcomes like how many quotes underwriters are getting out the door and how much business have they bound. We also meet with Sixfold every month to look at quality metrics."

─Amy Nelsen @Zurich North America

The Final Wisdom

Scaling AI in underwriting isn't primarily a technology challenge; it's a people and process challenge. Both Amy and Jim closed with the same underlying message: be prepared for things to change quickly in the world of AI, don't be afraid to fail, and don't stay stuck in proof-of-concept mode forever.

The insurers that will succeed at scaling AI are the ones that move deliberately, learn fast, and bring their underwriters along for the ride from day one.

Watch the video recording of the entire webinar here.
