Sixfold Content

Product Update

Meet the First AI Accuracy Validator Built for Insurance Underwriting

Today, we’re excited to introduce the first-ever AI Accuracy Validator built for insurance underwriting. This application provides customers with a transparent and comprehensive way to evaluate Sixfold’s accuracy—reinforcing our commitment to bring reliable and trustworthy risk assessments to underwriters.

Stay informed, gain insights, and elevate your understanding of AI's role in the insurance industry with our comprehensive collection of articles, guides, and more.

The Role of AI and IDP in Underwriting’s Future

Underwriting faces a data challenge, with manual processes consuming up to 40% of underwriters’ time. While IDP tools digitize data, underwriting AI goes further—providing actionable insights and enabling smarter risk analysis to improve efficiency and accuracy.

Insurance underwriting has long struggled with a data challenge: finding a way to handle the daily flood of information quickly and accurately. Why? Because the ability to process data significantly impacts the profitability and growth of insurers.

If this sounds familiar, here’s some good news: there’s now a better way to tackle these challenges. Underwriting-focused AI is transforming underwriting by processing complex data, providing risk summaries, and delivering tailored risk recommendations—all in ways that were previously unimaginable.

Most of the information underwriters need to make an underwriting decision comes in a mix of structured, semi-structured, and unstructured formats, including various types of documents such as broker emails, application forms, and loss runs. This data is often handled manually, consuming 30% to 40% of underwriters' time and ultimately impacting Gross Written Premium (McKinsey & Company).

To address this, insurers have long sought technological solutions. Intelligent Document Processing (IDP) tools were a key step forward, using technologies like Optical Character Recognition (OCR) to extract and organize data. However, while IDP helps digitize information, it doesn’t fully solve the problem of turning data into actionable insights.

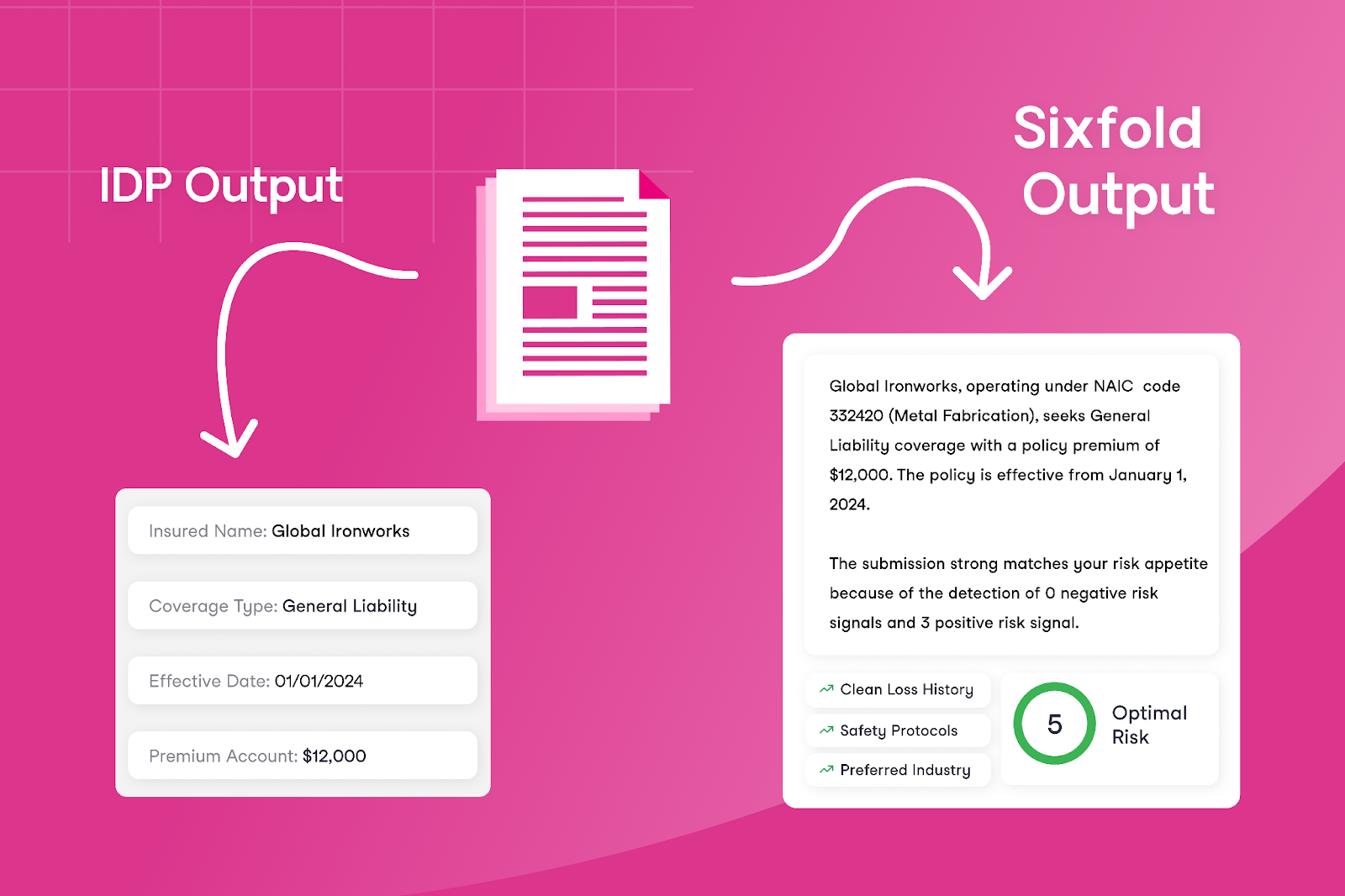

Comparing IDP and Underwriting AI

IDPs have been the predominant way of approaching underwriting efficiency, primarily used by insurers to automate tasks in underwriting, policy administration, and claims processing. These tools focus on converting generic documents into structured data by capturing text, classifying document types, and extracting key fields, offering a general solution across different industries.

Digitizing documents sounds like a no-brainer, right? But what happens if you go beyond simply digitizing documents? Underwriting AI makes this possible by offering a new approach to improving efficiency and accuracy for underwriters: it not only brings in all the data but also generates risk analysis and actionable insights. AI empowers underwriting teams to focus only on the information that truly matters for underwriting decisions.

The difference between these technologies becomes clearer when comparing the outputs of underwriting AI with those of traditional technologies like IDPs. Instead of only extracting every data field, risk assessment solutions that leverage LLMs, like Sixfold, use their trained understanding of what underwriters care about to decide what risk information to summarize and present.

This approach differs from IDP by focusing on presenting contextual insights for underwriters, such as risk patterns and appetite alignment. Instead of only providing the extracted data, it highlights key information that directly supports faster decision-making.

Different Approaches to Accuracy

By now, we already know that IDPs and AI solutions aim to improve efficiency and save underwriters time. But what about accuracy? Accuracy is the key component of a successful underwriting decision, which is why evaluating it is so important for tools focused on supporting underwriters. Let’s highlight the differences between what matters for IDP versus AI tools in terms of accuracy.

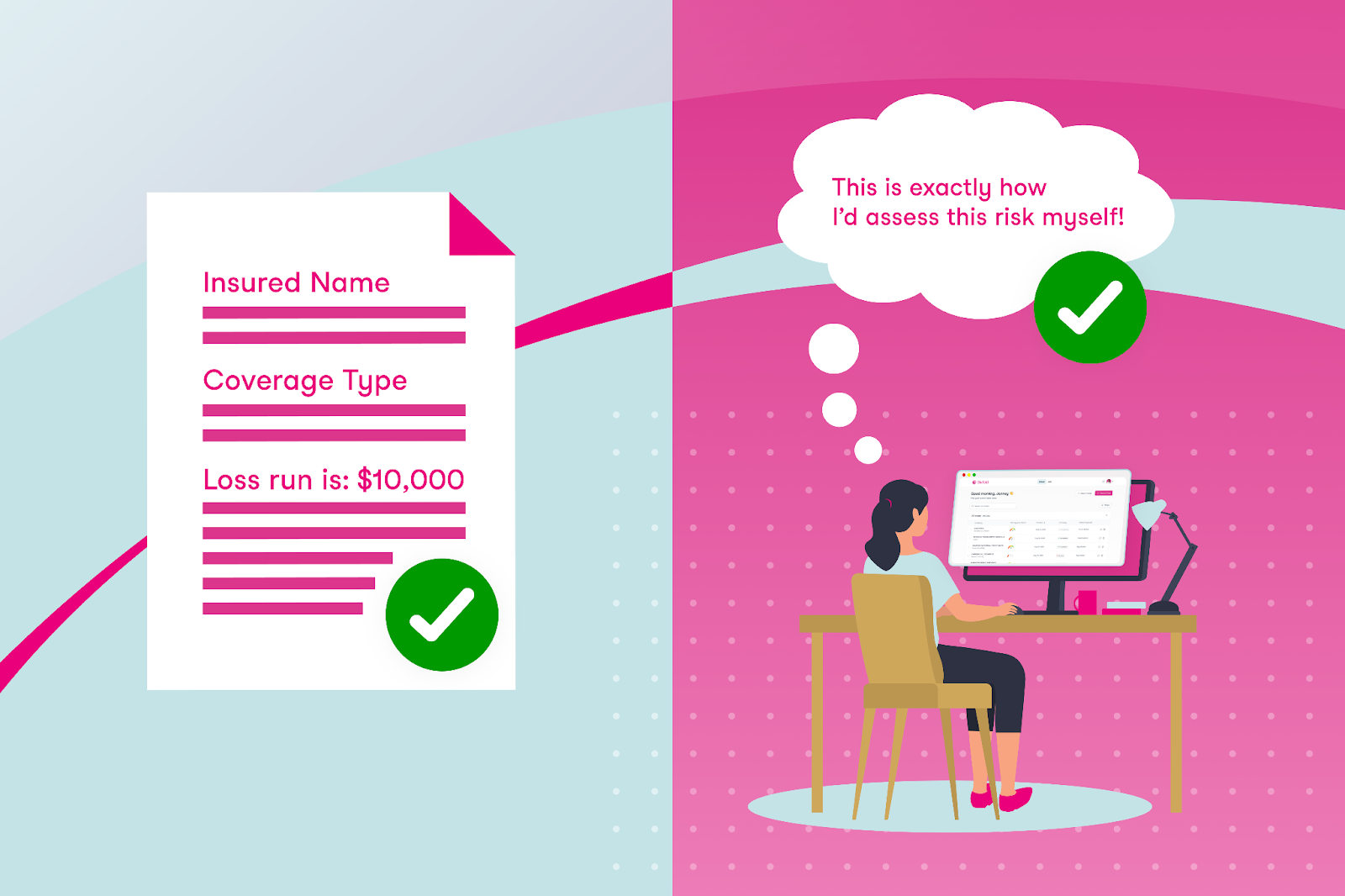

IDPs - Accuracy is about precise field extraction

IDPs focus on data processing, so their success is measured by how accurately they extract each text field. This makes sense given their role in automating structured data collection. If the data field in a document says “Loss run is: $10,000” and the IDP extracts “$1,000” then it’s easy to say that it was not an accurate extraction.
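To make field-level accuracy concrete, here is a minimal Python sketch. It assumes a hand-labeled set of expected field values and uses hypothetical field names (`insured_name`, `loss_run_total`); real IDP evaluations may normalize values or weight fields differently.

```python
def field_accuracy(expected: dict[str, str], extracted: dict[str, str]) -> float:
    """Return the share of expected fields whose extracted value matches exactly."""
    if not expected:
        raise ValueError("expected must contain at least one field")
    correct = sum(
        1
        for name, value in expected.items()
        # A missing field counts as a miss; surrounding whitespace is ignored.
        if extracted.get(name, "").strip() == value.strip()
    )
    return correct / len(expected)


# Hypothetical labeled values versus what an IDP tool returned.
expected = {"insured_name": "Acme Bakery", "loss_run_total": "$10,000"}
extracted = {"insured_name": "Acme Bakery", "loss_run_total": "$1,000"}

score = field_accuracy(expected, extracted)
print(score)  # 0.5: one of the two fields was extracted correctly
```

Exact matching is deliberately strict here, which mirrors the point above: "$1,000" is simply wrong when the document says "$10,000".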

Underwriting AI - Accuracy is about human-like reasoning

The accuracy of underwriting AI lies in its ability to align reasoning and output with the task, presenting the key risk data underwriters themselves would choose to prioritize. Evaluating AI accuracy therefore means determining how closely the AI mirrors an underwriter in assessing risk submissions.

The reality is that when AI is used for risk analysis rather than field-by-field extraction, small extraction mistakes matter much less. For example, consider a loss run: as an underwriter, what’s important isn’t necessarily every individual line or small loss but rather recognizing patterns, such as total losses exceeding $10,000 within a specific timeframe or recurring trends in certain types of losses. An underwriting AI can uncover these insights by focusing on significant trends and aggregating relevant data, looking at a case the same way a human would.
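The loss-run example can be illustrated with a short Python sketch. The rows, loss types, and the rule that "recurring" means two or more losses of the same type are assumptions made for illustration; this is not a description of how Sixfold or any other product works.

```python
from collections import Counter
from datetime import date

# Hypothetical loss-run rows: (date of loss, loss type, paid amount in USD).
loss_run = [
    (date(2022, 3, 1), "water damage", 4_200),
    (date(2022, 9, 15), "water damage", 3_900),
    (date(2023, 1, 20), "theft", 800),
    (date(2023, 6, 5), "water damage", 5_100),
]


def summarize(rows, start, end, threshold=10_000):
    """Aggregate losses inside [start, end] and flag the patterns an underwriter looks for."""
    in_window = [row for row in rows if start <= row[0] <= end]
    total = sum(amount for _, _, amount in in_window)
    counts = Counter(kind for _, kind, _ in in_window)
    # Assumption: a loss type is "recurring" if it appears at least twice.
    recurring = sorted(kind for kind, n in counts.items() if n >= 2)
    return {
        "total": total,
        "exceeds_threshold": total > threshold,
        "recurring": recurring,
    }


summary = summarize(loss_run, date(2022, 1, 1), date(2023, 12, 31))
print(summary)
```

With these rows the total is $14,000, which exceeds the $10,000 threshold, and water damage is the only recurring loss type. A small error in any single line would not change either conclusion.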

How to Choose the Right Tool?

The answer isn’t as simple as this or that. There are successful examples of insurers using one, the other, or even both solutions, depending on their needs.

IDP can be a great tool for extracting fields like names, addresses, and other key information from documents to feed into downstream systems. Meanwhile, AI-focused technologies like Sixfold are great for risk analysis. It’s important to note that a company doesn’t need to have an IDP solution in place to adopt an AI solution like Sixfold – these technologies can work independently.

To decide which technology is the best fit for your underwriting needs, whether it’s one of these solutions or potentially both, consider the specific challenges you’re addressing. If your goal is to reduce the manual workload for underwriters, start by identifying the exact inefficiencies. For instance, if the issue is that underwriters spend a significant amount of time manually extracting text fields from one document and re-entering them into another system, IDP could be a good option. It’s built for that kind of work, and field extraction is exactly what you are paying for.

However, if you want to address larger inefficiencies in the underwriting process — such as reducing the total time it takes for underwriters to respond to customers — you’ll need to tackle bigger bottlenecks. While it’s true that manual data entry is a challenge, much more of underwriters’ time is spent on manual research tasks such as reviewing hundreds of pages looking for specific risk data, or finding the right NAICS code for a business. These are areas where underwriting AI would be better suited.

So, What's Next for Underwriting?

IDP can efficiently digitize key information, but it doesn’t solve the broader challenges of manual inefficiencies that underwriters deal with daily.

Imagine a future in underwriting where IDP effortlessly extracts core data fields essential for accurate rating, ensuring consistent and reliable input into downstream systems. Meanwhile, specialized underwriting AI takes on the rest of the heavy manual lifting—matching risks to appetite, delivering critical context for decisions, and significantly reducing the time it takes to quote.

By combining the OCR capabilities of IDP with the intelligence of underwriting AI, insurers can make underwriting a lot less manual. These tools promise a 2025 where underwriters spend less time on repetitive tasks and more time making smart risk decisions.

This post was originally posted on LinkedIn

Just Launched: Beyond the Policy 🚀

I’m super excited to introduce Beyond the Policy, Sixfold’s innovation hub designed exclusively for underwriters.

Here at Sixfold, we talk to underwriters all the time—on calls, in meetings, at conferences, and through feedback on everything we create. Why? Because understanding the real challenges underwriters face is at the core of what we do.

From those conversations, we realized there’s no central digital space where underwriters can stay updated on industry innovations, exchange insights, and find tools to grow their careers.

That got us thinking — what if we built that place?

So, we did! I’m super excited to introduce Beyond the Policy, Sixfold’s innovation hub designed exclusively for underwriters. Here’s what you can expect:

Real Stories From the Frontlines

Why? Great advice comes from those who’ve faced the same challenges. That’s why Beyond the Policy features interviews with experienced underwriters who share their perspectives on the challenges and opportunities shaping the industry today. These Q&As provide practical insights, lessons learned, and tips you can apply directly to your own work.

We began our interviews with underwriters from major players like Sompo International and NSM, as well as from smaller MGAs and consultancies, ensuring diverse (and always personal) perspectives.

AI Crash Course for Underwriting Leaders

Why? AI is transforming underwriting, but getting started can feel overwhelming. To help, we’ve created a crash course specifically for underwriting leaders. It’s designed to provide clear, actionable steps for getting started.

This course brings together insights from top AI experts across diverse fields, including the Head of AI at Sixfold and a Lead AI Counsel at Debevoise & Plimpton. Whether you're new to AI or looking to enhance your approach, this resource is a great starting point.

Will we launch more courses in the future? Absolutely - stay tuned for our next one.

The Best of the Web for Underwriters

Why? The internet is full of information, but finding what’s truly relevant can be a challenge. That’s why we’re doing the work for you. Beyond the Policy features curated content tailored to underwriters, pulling together key industry updates and trends - and updating it frequently. Fresh content, every week!

This week's recommended content includes a great episode from The Insurance Podcast featuring Send (underwriting insurance software) and an article from Deloitte on the insurance outlook in 2025.

Monthly Emails You’ll Actually Want to Read

Why? We know you’re busy, so we’ve packed our monthly emails with the best of what Beyond the Policy has to offer. Expect updates on the latest Q&As, new resources like the AI crash course, and handpicked articles that are worth your time.

These emails are designed to keep you ahead (without adding to your email load).

➡️ Beyond the Policy is now live!

If you’re an underwriter, I invite you to check it out, sign up for our monthly emails and hopefully learn a new thing or two.

Enjoy!

AXIS Adopts Sixfold’s Purpose-Built AI Solution

October 18, 2024 - Sixfold, the AI solution designed to streamline end-to-end risk assessments for underwriters, announced its partnership with AXIS, a global leader in specialty insurance and reinsurance, a collaboration that has yielded positive results in its initial rollout. Within the first month of deployment, AXIS underwriters leveraged Sixfold’s solution to improve efficiency, accurately classifying businesses and aligning cases with their risk appetite.

“This partnership is all about leveraging AI to empower our underwriters and even further enhance the service we provide to our customers. We were searching for a solution that could reliably deliver precision, and Sixfold has done just that and more.

The real game-changer has been the time savings—freeing up valuable hours so our underwriters can zero in on the work that drives results while ultimately benefiting the customer,” said Josh Fishkind, Head of Innovation at AXIS.

“Our goal is to provide meaningful ROI for all our customers, and AXIS has already begun to see these benefits,” said Alex Schmelkin, Sixfold's Founder & CEO. “We look forward to continuing our partnership as AXIS discovers more ways Sixfold can enhance their underwriting processes.”

Read the full customer story here and check out the Insurance Post article covering our work with AXIS.

Sixfold Partners with CyberCube

The partnership with CyberCube aligns with the strategy of utilizing the best data sources to streamline underwriting, keeping insurers ahead as cyber insurance premiums are projected to reach $20 billion by 2025.

In 2025, the cyber risk landscape is expected to become more complex with increasing threats driven by rising privacy violations, data breaches, the rise of AI, and external factors such as emerging regulations. According to Munich Re, the cyber insurance market has nearly tripled in size over the past five years, with global premiums projected to surpass $20 billion by 2025, up from nearly $15 billion in 2023, as reported by CyberSecurity Dive.

Reflecting the rapid market growth and emerging threats, Sixfold has seen increased demand from specialty insurers in the cyber sector and has successfully brought on several industry leaders as customers. "In the near future, cyber policies will become as essential as General Liability or Property & Casualty coverage. Given the world we live in, this shift is inevitable. Cyber policies are poised to become the most specific and highly customized policies available," said Jane Tran, Co-founder & COO at Sixfold.

Empowering Underwriters to Quickly Adapt to New Cyber Risks

As cyber risks grow, the pressure on underwriters to assess risks accurately and expedite the case review process continues to increase. Sixfold’s AI solution for cyber insurance addresses these challenges by securely ingesting each insurer’s underwriting guidelines and aggregating all necessary business information to quickly provide recommendations that align with the carrier’s risk appetite. This capability allows insurers to quickly adjust their risk strategies in response to new cyber threats.

“With Sixfold, insurers can synchronize their underwriting guidelines across the board and adapt quickly. For example, when a new malware threat is identified, you can instantly incorporate it into your risk criteria through Sixfold. This ensures that the entire cyber team factors it into their assessments immediately without needing to learn every detail of the threat or spending hours digging for the right information,” said Alex Schmelkin, Founder & CEO of Sixfold.

In addition, effective cyber underwriting demands deep expertise in IT systems, cybersecurity measures, and industry developments. This need for specific expertise presents a significant talent issue for insurers, especially with 50% of the underwriting workforce set to retire by 2028. Sixfold bridges the knowledge gap by instantly providing underwriters with the specialized knowledge they need for accurate risk assessments.

“Underwriters no longer need to be cyber experts; they can rely on Sixfold to spotlight the critical information needed for accurate underwriting decisions. Our platform simplifies the complex world of cyber risk and empowers underwriters to make more confident decisions, faster,” said Jane Tran, Co-founder & COO at Sixfold.

Sixfold Partners with CyberCube to Enhance Cyber Risk Assessments

Sixfold has teamed up with CyberCube, the world’s leading provider of analytics for quantifying cyber risk. This integration of CyberCube's advanced cyber risk analytics with Sixfold's AI underwriting solution enables insurers to achieve faster and more accurate risk assessments. The partnership enhances underwriting efficiency, strengthens regulatory compliance, and offers highly tailored cyber insurance solutions, empowering insurers to stay ahead of the rapidly evolving cyber threat landscape. “The partnership between CyberCube’s comprehensive cyber data and Sixfold’s innovative risk assessment is setting a new standard for the future of underwriting, keeping insurers prepared for new challenges in determining accurate cyber policies,” said Ross Wirth, Head of Partnership and Ecosystem for CyberCube.

To see how Sixfold speeds up the cyber underwriting process, join our upcoming live product demo.

How to Secure AI Compliance in Insurance

Sixfold's CEO and founder, Alex Schmelkin, along with special guests, discusses developments in AI regulation for the U.S. insurance industry and addresses common compliance concerns.

With the rise of AI solutions in the insurance market, questions around AI regulations and compliance are increasingly at the forefront. Key questions such as “What happens when we use data in the context of AI?” and “What are the key focus areas in the new regulations?” are top of mind for both consumers and industry leaders.

To address these topics, Sixfold’s founder and CEO, Alex Schmelkin, hosted the webinar “How to Secure Your AI Compliance Team’s Approval”. Joined by industry experts Jason D. Lapham, Deputy Commissioner for P&C Insurance for the State of Colorado, and Matt Kelly, Data Strategy & Security Counsel at Debevoise & Plimpton, the discussion provided essential insights into navigating AI regulations and compliance.

Here are the key insights from the session:

AI Regulation Developments: Colorado Leads the Way in the U.S.

“There’s a requirement in almost any regulatory regime to protect consumer data. But now, what happens when we start using that data in AI? Are things different?” — Alex Schmelkin

Both nationally and globally, AI regulations are being implemented. In the U.S., Colorado became the first state to pass a law and implement regulations related to AI in the insurance sector. Jason Lapham explained that the key components of this legislation revolve around two major requirements:

- Governance and Risk Management Frameworks: Companies must establish robust frameworks to manage the risks associated with AI and predictive models.

- Quantitative Testing: Businesses must test their AI models to ensure that outcomes generated from non-traditional data sources (e.g., external consumer data) do not lead to unfairly discriminatory results. The legislation also mandates a stakeholder process prior to adopting rules.

Initially, the focus was on life insurance, as it played a critical role in shaping the legislative process. The first regulation, implementing Colorado’s Bill 169, adopted in late 2023, addressed governance and risk management. This regulation applies to life insurers across all practices, and the Regulatory Agency received the first reports this year from companies using predictive models and external consumer data sources.

So, what’s the next move for the first-moving state in terms of AI regulations? The Colorado Division of Insurance is developing a framework for quantitative testing to help insurers assess whether their models produce unfairly discriminatory outcomes. Insurers are expected to take action if their models do lead to such outcomes.

Compliance Approach: Develop Governance Programs

“When we’re discussing with clients, we say focus on the operational risk side, and it will get you largely where you need to be for most regulations out there.” — Matt Kelly

With AI regulations differing across U.S. states and globally, companies face challenges. Matt Kelly described how his team at Debevoise & Plimpton navigates these challenges by building a framework that prioritizes managing operational risk related to technology. Their approach involves asking questions such as:

- What AI is being used?

- What risks are associated with its use?

- How is the company governing or mitigating those risks?

By focusing on these questions, companies can develop strong governance programs that align with most regulatory frameworks. Kelly advises clients to center their efforts on addressing operational risks, which takes them a long way toward compliance.

The Four Pillars of AI Compliance

Across different AI regulatory regimes, four common themes emerge:

- Transparency and Accountability: Companies must understand and clearly explain their AI processes. Transparency is a universal requirement.

- Ethical and Fair Usage: Organizations must ensure their AI models do not introduce bias and must be able to demonstrate fairness.

- Consumer Protection: In all regulatory contexts, protecting consumer data is essential. With AI, this extends to ensuring models do not misuse consumer information.

- Governance Structure: Insurance companies are responsible for ensuring that they—and any third-party model providers—comply with AI regulations. While third-party providers play a role, carriers are ultimately accountable.

Matt Kelly emphasizes that insurers can navigate these four themes successfully by establishing the right frameworks and governance structures.

Protection vs. Innovation: Striking the Right Balance

“We tend not to look at innovation as a risk. We see it as aligned with protecting consumers when managed correctly.” — Matt Kelly

Balancing consumer protection with innovation is crucial for insurers. When done correctly, these goals align. Matt noted that the focus should be on leveraging technology to improve services without compromising consumer rights.

One major concern in insurance is unfair discrimination, particularly in how companies categorize risks using AI and consumer data. Regulators ask whether these categorizations are justified based on coverage or risk pool considerations, or whether they are unfairly based on unrelated characteristics. Aligning these concerns with technological innovation can lead to more accurate and fair coverage decisions while ensuring compliance with regulatory standards.

Want to learn more?

Watch the full webinar recording and download Sixfold’s Responsible AI framework to learn about our approach to safe AI usage.

How to Choose an AI Vendor (Who Can Actually Deliver)

Sixfold’s Head of AI explains how to pick the right team to build your AI insurance solution.

Companies of all sizes are actively exploring how emerging AI technologies can overcome longstanding business challenges. Inevitably, they run up against the reality that weathered AI pros like myself have long known: AI ain’t easy. Rather than going it alone, many businesses choose to partner with firms that specialize in building solutions with LLMs. The good news? There are a growing number of AI vendors to pick from, with more popping up all the time. The bad? Discerning if a vendor can deliver what you need isn’t always so straightforward.

It seems like everyone and their little cousin touts the ability to “wrap” custom applications around one of the big-name LLMs. If that’s all they bring to the table, they might help you address simple use cases, but probably won’t have the chops to build and manage complex solutions in heavily regulated industries like insurance. That’s a whole different thing.

So, how can you tell if a prospective vendor can meet your business's needs? In this blog post, I’ll explore some key areas along the AI value chain and propose some questions to ask so you can make an informed decision.

Input preparation

What you put into your AI system is what you get out of it. Make sure a prospective vendor prioritizes clean data, stored and handled in a secure, compliant manner.

You can think of data like a commodity that powered the previous century: oil. You don’t just dig some oil out of the ground and pour it into your gas tank. (Or, I guess you could, but you wouldn’t get far before your engine seized up.) Like oil, data requires multiple rounds of preparation before it can be used.

The value of the output your AI produces is directly related to the quality of the input. Before moving forward with any prospective vendor, ensure they have the means—and indeed, the knowledge—to help you build compliant, secure data workflows from beginning to end.

Here are some points to consider to ensure this is a vendor for you.

Questions to consider:

- How will the data be collected?

Data must be carefully collected to protect privacy and prevent bias. Ensuring that data has been ethically obtained and correctly governed is a point of emphasis for regulators.

- How will the data be “cleaned”?

Data needs to be refined and structured in a way that an AI solution can use and interpret. Make sure a prospective vendor understands what types of data are appropriate for your use case and how to prepare it at scale.

- How will the data be transferred, stored, and secured?

When developing solutions for complex, highly regulated industries, proof of compliance with standards like SOC 2 and HIPAA is table stakes. Additionally, you’ll want to verify that the vendor uses secure data handling methods, such as encryption in transit and at rest, to prevent unauthorized access. Also, ensure they effectively track the status of the data over time via robust version control and data lineage systems.

Prompt development

LLMs work best when you make it difficult for them to make mistakes. An AI vendor should understand how to craft prompts to generate business value.

For an AI solution to generate value, it must surface useful information with as little human intermediation as possible. This is achieved by ensuring that every prompt to an LLM includes all guidance, data access, and guardrails necessary to generate a high-quality return. Things like:

1. Industry-specific content to guide results

2. Phrasing that reflects informed insight into the domain

3. Precise instructions on the structure of the result being sought

Your vendor will need to demonstrate they understand the capabilities and limitations of AI and can provide insights on how to structure LLM conversations to extract maximum value. Here are some points to review with a prospective partner to ensure they have the means—and better yet, a history—of value-oriented prompt engineering.
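As a rough illustration of the three ingredients listed above, the Python sketch below assembles a single prompt from domain content, domain-aware phrasing, and an explicit output shape. The function name `build_prompt`, the output field names, and the sample guideline text are all hypothetical; a production prompt would be considerably more involved.

```python
import json


def build_prompt(guidelines: str, submission: str, output_fields: list[str]) -> str:
    """Assemble one prompt from domain content, domain phrasing, and a strict output shape."""
    # 3. Precise instructions on the structure of the result being sought.
    output_shape = json.dumps({field: "<string>" for field in output_fields}, indent=2)
    sections = [
        # 2. Phrasing that reflects the domain rather than a generic request.
        "You are assisting a commercial property underwriter. "
        "Assess whether the submission fits the carrier's risk appetite.",
        # 1. Industry-specific content to guide results.
        "Underwriting guidelines:\n" + guidelines,
        "Submission:\n" + submission,
        "Respond only with JSON in exactly this shape:\n" + output_shape,
    ]
    return "\n\n".join(sections)


prompt = build_prompt(
    guidelines="Restaurants with deep fryers require a serviced suppression system.",
    submission="Family-owned restaurant, two deep fryers, suppression system serviced 2024.",
    output_fields=["appetite_fit", "key_risks", "rationale"],
)
print(prompt)
```

Keeping the guidelines, the submission, and the output shape as separate inputs makes it easier to see which part changed when a prompt is revised.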

Questions to consider:

- How do they build prompts, and what domain-specific knowledge do they have?

Technical acumen is one thing, but does the vendor understand the specific needs of your industry? It’s one thing to ask an LLM to plan out a fun afternoon at the beach; it’s another to have it understand whether, for example, family-owned restaurants align with a home insurer's risk appetite.

- What methods are used to select material included in the context window?

You should understand the vendor’s criteria for selecting contextually relevant information and how they ensure this information is timely and accurate. Ask what processes they use to filter and prioritize the most pertinent data for inclusion in prompts.

- How often, and in what ways, are prompts updated over time? Are these changes tracked?

Learn about their schedule for reviewing and updating prompts to keep them aligned with the latest industry trends and data. Ensure they have a system for tracking changes to prompts, including version history and impact analysis, to maintain transparency and continuous improvement.

- What methods are used to evaluate the results of prompts, and to compare the results to prior versions when changes are made?

Ask about their evaluation metrics and benchmarks for assessing prompt performance, including accuracy, relevance, and consistency. Understand their process for A/B testing new prompt versions and how they compare the results to previous versions to ensure improvements.
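One simple way to picture comparing prompt versions is to score each version against the same labeled evaluation set. The Python sketch below is only an illustration: `prompt_v1` and `prompt_v2` are stand-in functions rather than real LLM calls, and the four labeled examples are invented.

```python
from typing import Callable

EvalSet = list[tuple[str, str]]  # (submission text, label chosen by an underwriter)


def accuracy(run_prompt: Callable[[str], str], eval_set: EvalSet) -> float:
    """Share of evaluation examples where the prompt's answer matches the label."""
    hits = sum(1 for text, expected in eval_set if run_prompt(text) == expected)
    return hits / len(eval_set)


# Stand-ins for two prompt versions; a real system would call an LLM here.
def prompt_v1(text: str) -> str:
    return "in appetite"


def prompt_v2(text: str) -> str:
    return "out of appetite" if "fryer" in text else "in appetite"


eval_set: EvalSet = [
    ("Bakery, sprinklers installed", "in appetite"),
    ("Restaurant with deep fryer, no suppression system", "out of appetite"),
    ("Cafe, deep fryer, suppression system serviced annually", "in appetite"),
    ("Food truck, deep fryer, prior fire loss", "out of appetite"),
]

# Keep a score per version so a change can be compared with its predecessor.
history = {name: accuracy(fn, eval_set) for name, fn in [("v1", prompt_v1), ("v2", prompt_v2)]}
print(history)  # {'v1': 0.5, 'v2': 0.75}
```

Here v2 improves on v1 but still misclassifies the cafe with a serviced suppression system, which is the kind of regression detail a per-example comparison would surface.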

Output control

Non-deterministic AI systems act in unpredictable ways. A quality vendor should know how to measure misaligned behaviors, as well as how to address them.

Ask an LLM the same question 10 times and you might get 10 different responses. The goal is to generate 10 accurate, useful answers. Achieving this requires putting as much care into reviewing the system’s output as you do into preparing the input.
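A basic way to quantify this variability is to ask the same question repeatedly and measure how often the answers agree. The following Python sketch assumes the ten responses have already been collected; the response strings are made up, and exact-match agreement after lowercasing is a simplification that suits short categorical answers better than free text.

```python
from collections import Counter


def agreement_rate(responses: list[str]) -> float:
    """Share of responses that match the most common response."""
    if not responses:
        raise ValueError("responses must not be empty")
    normalized = [response.strip().lower() for response in responses]
    _, top_count = Counter(normalized).most_common(1)[0]
    return top_count / len(normalized)


# Hypothetical answers from asking the same appetite question 10 times.
responses = ["In appetite"] * 8 + ["Out of appetite", "Refer to senior underwriter"]

rate = agreement_rate(responses)
print(rate)  # 0.8
```

Agreement alone does not show that the majority answer is correct, so a check like this complements, rather than replaces, review against expert judgment.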

Continuous monitoring and tweaking are necessary to adapt your system to accommodate new data and evolving requirements. Here are some questions to explore when evaluating a vendor’s approach to scaled output control.

Questions to consider:

- What evals will you run?

Inquire about their evaluation frameworks, including both automated and manual assessments, to ensure outputs meet quality standards. Learn about the specific metrics they use to evaluate outputs, such as precision, recall, and F1 score, as well as checks for hallucinations and biases.

- What role will human experts play in this process?

Verify that human subject matter experts are involved in reviewing and validating AI outputs to ensure they are contextually appropriate and accurate. Ask about their process for incorporating expert feedback into continuous improvement cycles for the AI system.

- How often will you review overall results, and what metrics will you use to guide refinement and improvement?

Get a handle on their schedule for regular reviews and audits of AI outputs to ensure ongoing quality and relevance. Inquire about the key performance indicators (KPIs) and metrics they use to monitor and refine the AI system, such as user satisfaction scores, error rates, and feedback loops.
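For readers less familiar with precision, recall, and F1 score, the Python sketch below computes them for a single case by comparing the risk factors an AI flagged with those an underwriter flagged. The risk factor names are invented, and treating the underwriter's list as ground truth is an assumption made for illustration.

```python
def precision_recall_f1(predicted: set[str], relevant: set[str]) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 for a set of predicted items against a reference set."""
    true_positives = len(predicted & relevant)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(relevant) if relevant else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical risk factors for one submission.
flagged_by_ai = {"prior fire loss", "deep fryer", "no sprinklers", "late payments"}
flagged_by_underwriter = {"prior fire loss", "deep fryer", "no sprinklers", "vacant unit", "roof age"}

precision, recall, f1 = precision_recall_f1(flagged_by_ai, flagged_by_underwriter)
print(round(precision, 2), round(recall, 2), round(f1, 2))  # 0.75 0.6 0.67
```

Precision answers "how much of what the AI surfaced was relevant?", while recall answers "how much of what mattered did the AI surface?"; F1 combines the two into one number.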

Transparency

Not only does visibility allow you to properly evaluate an AI’s performance, it’s increasingly required by regulators as a means to address system bias.

Transparency is crucial for every step from data preparation to prompt development and output review. You cannot evaluate what you cannot see. To maintain the highest possible standards, every AI vendor should be prepared to provide a window into every step under their control.

Questions to consider:

- Can you provide clear documentation of your processes and methods?

Ensure that the vendor offers comprehensive and understandable documentation covering all aspects of their AI processes, from data collection to output generation. Ask for examples of their documentation to assess its clarity and completeness.

- Can you demonstrate every point at which you interact with an LLM, and provide a complete trail of what information was exchanged?

Verify that the vendor maintains detailed logs and records of interactions with the LLM, including data inputs, prompts, and outputs. Ensure they can provide audit trails that detail the flow of information through their systems, which is crucial for regulatory compliance and troubleshooting.

- Will you provide a routine report on your evaluations and your measurement of potential bias?

Inquire about their regular reporting practices, including how often they produce reports on AI performance, bias detection, and mitigation efforts. Ask to see examples of these reports to evaluate their thoroughness and transparency in addressing potential biases and other issues.
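To give a sense of what a trail of LLM interactions might contain, here is a minimal Python sketch that builds one audit record per call. The record fields, the function name `audit_record`, and the idea of storing a hash of the source document are assumptions for illustration, not a description of any vendor's logging.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(prompt_version: str, prompt: str, output: str, source_document: bytes) -> str:
    """Build one JSON line describing a single LLM interaction."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_version": prompt_version,
        # A hash ties the record to the exact input without copying the document.
        "source_sha256": hashlib.sha256(source_document).hexdigest(),
        "prompt": prompt,
        "output": output,
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    prompt_version="v2",
    prompt="Assess appetite fit for the attached submission.",
    output='{"appetite_fit": "in appetite"}',
    source_document=b"Family-owned restaurant, two deep fryers.",
)
print(line)
```

Appending one such line per interaction to a write-once log would let a reviewer reconstruct which prompt version and which input produced a given output.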

At Sixfold, we’ve created a Responsible AI framework for prospects and customers to showcase our ongoing transparency work.

In Summary

AI has the potential to overcome challenges that have been holding businesses back for decades. If you haven’t started your AI journey, now’s the time to get started. Partnering with an AI vendor can help you identify use cases ripe for transformation and provide the skills to get you there.

I hope that this checklist helped you identify which vendor has the right combination of technical know-how, industry expertise, and regulatory awareness to get your business where it needs to be.